The question of whether robots can truly think like humans has intrigued scientists, philosophers, and tech enthusiasts for decades. As artificial intelligence (AI) continues to evolve, we find ourselves facing the age-old debate of whether machines can ever replicate the complexities of human cognition. With rapid advances in robotics, AI, and neural networks, the dream of creating robots that can think like humans is more tangible than ever. But is it achievable? And what would it truly mean for a machine to “think” like a person?

In this article, we will explore the intersection of robotics, AI, and human cognition, diving deep into the nature of intelligence, the capabilities of machines, and the philosophical and ethical implications of robots with human-like minds.

Understanding Human Cognition

Before we can assess whether robots can think like humans, it’s important to first understand what it means to think like a human. Human cognition is incredibly complex. It involves the ability to process information, make decisions, understand emotions, and learn from experience. It encompasses a broad range of mental activities: perception, attention, memory, reasoning, language, and problem-solving.

The human brain is composed of around 86 billion neurons that communicate through electrical and chemical signals, enabling us to process vast amounts of information in real time. Our cognitive abilities allow us to make sense of the world, form abstract concepts, and adapt to new situations.

Moreover, humans possess a degree of emotional intelligence, which plays a significant role in decision-making. Emotions such as fear, joy, sadness, and empathy inform our choices and shape our social interactions. This emotional depth is something that has been particularly challenging for machines to replicate, as it requires not only cognitive processing but also an understanding of context, culture, and subjective experience.

AI and Machine Learning: The Building Blocks of Robot Intelligence

At the core of the modern advancements in robotics and AI is machine learning (ML). Machine learning enables computers to “learn” from data, identify patterns, and make decisions without being explicitly programmed to perform specific tasks. ML algorithms allow robots to process vast amounts of data and make predictions or perform actions based on that data.
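To make "learning from data" concrete, here is a minimal sketch of a perceptron, one of the simplest learning algorithms. It is an illustration chosen for this article, not a description of how any particular robot works; the function names and the tiny AND dataset are hypothetical. Nothing in the code states the rule for AND. The weights are adjusted only in response to prediction errors on the examples.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum of the inputs is positive, else stay silent (0)."""
    total = weights[0] * inputs[0] + weights[1] * inputs[1] + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Adjust weights from labeled examples using the perceptron update rule."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            # Nudge each weight in the direction that reduces the error.
            weights[0] += learning_rate * error * inputs[0]
            weights[1] += learning_rate * error * inputs[1]
            bias += learning_rate * error
    return weights, bias


# Hypothetical training data: the logical AND of two binary inputs.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_DATA)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_DATA]
print(predictions)
```

After training, the predictions match the targets in the dataset. The same pattern (predict, measure the error, adjust) underlies far larger systems, although those use more sophisticated models and update rules.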

However, machine learning is still far from being able to replicate the full depth and breadth of human cognition. While ML can be incredibly powerful in specific contexts—such as image recognition, language processing, and even playing complex games like chess or Go—it remains fundamentally different from human thinking in several key ways.

Narrow AI vs. General AI

The current state of AI is primarily focused on what is known as narrow AI or weak AI. This refers to AI systems that are designed to perform specific tasks, such as recommending products, recognizing faces, or driving cars. These systems excel at handling the specific functions they are designed for, but they lack the broader cognitive abilities to think outside of their programming.

In contrast, general AI (or strong AI) refers to a type of AI that could perform any intellectual task that a human can. General AI would need to possess a high level of cognitive flexibility, enabling it to learn new skills, adapt to unfamiliar situations, and think critically across a wide range of domains. Achieving general AI is the ultimate goal of many AI researchers, but it remains elusive.

While narrow AI has made significant strides, general AI remains a research goal rather than a working technology. Some experts believe that we are still a long way from developing machines that can think with the same level of versatility, creativity, and emotional intelligence as humans.

Neural Networks: Mimicking the Human Brain?

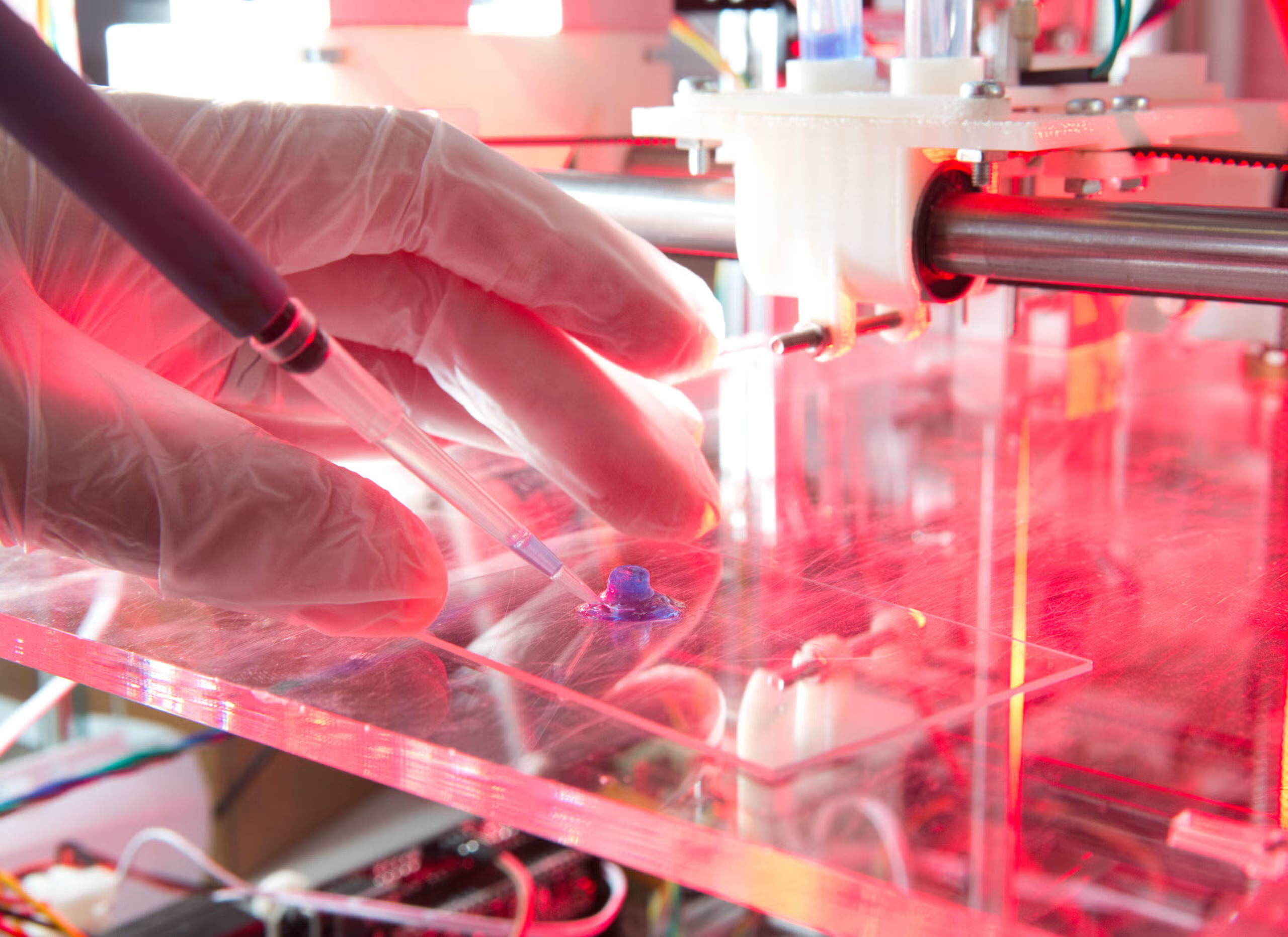

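Neural networks, inspired by the structure of the human brain, are one of the most promising approaches to building intelligent machines. These networks consist of layers of interconnected artificial neurons. Each artificial neuron computes a weighted sum of its inputs and passes the result through a simple nonlinear function, which is a loose abstraction of how biological neurons fire rather than a faithful copy of the brain’s neuronal activity.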

Deep learning, a subset of machine learning, uses large neural networks with many layers to process data. These deep neural networks have achieved remarkable success in tasks such as image and speech recognition, natural language processing, and even playing complex games.
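As a rough sketch of what "layers of artificial neurons" means in practice, the following code passes an input through a tiny two-layer network. The weights in `TOY_LAYERS` are made-up numbers chosen for illustration, and the sigmoid activation is just one common choice. Real deep networks have many more neurons and learn their weights from data rather than having them written by hand.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """Compute one layer: each neuron takes a weighted sum of the inputs, then applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


def network_forward(inputs, layers):
    """Feed the inputs through each layer in turn; one layer's output is the next layer's input."""
    activations = list(inputs)
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Hypothetical weights: a hidden layer with two neurons, then an output layer with one.
TOY_LAYERS = [
    ([[0.5, -0.4], [0.3, 0.8]], [0.0, -0.1]),
    ([[1.2, -0.7]], [0.05]),
]

output = network_forward([1.0, 0.0], TOY_LAYERS)
print(output)
```

The "depth" in deep learning refers to the number of such layers stacked between input and output.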

While deep learning algorithms have made significant progress, there are still fundamental differences between the way a machine learns and the way a human brain works. The human brain is incredibly efficient, processing information in parallel and integrating sensory input from a variety of sources—something that current AI systems are still far from replicating.

Additionally, the brain’s ability to form abstract concepts, engage in creative thinking, and apply common sense knowledge in a wide variety of situations remains a major challenge for AI. Despite these limitations, neural networks continue to be an important research area for building more human-like robots.

The Emotional Dimension: Can Robots Feel?

One of the most significant differences between human and machine intelligence is the presence of emotions. Emotions are deeply integrated into our cognitive processes, influencing our thoughts, decisions, and actions. They help us form social bonds, navigate complex moral dilemmas, and respond to environmental stimuli in adaptive ways.

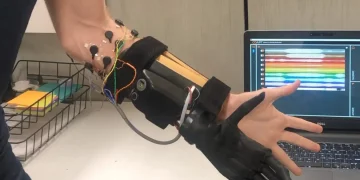

Can robots, with their circuits and algorithms, ever truly experience emotions like humans? At present, robots are capable of simulating emotions, but these simulations are purely computational. For example, robots can be programmed to recognize human emotions through facial expressions or voice tone and respond accordingly with pre-programmed emotional expressions or behaviors. This allows robots to engage in human-like interactions, but it does not mean that they “feel” emotions in the same way humans do.
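The recognize-then-respond pattern described above can be sketched in a few lines. This is a deliberately crude illustration: the keyword lists, labels, and canned replies are all hypothetical, and real systems typically use trained classifiers on faces or voice rather than keyword matching. The point is that the "emotional" reply is a table lookup, with nothing in the program corresponding to a feeling.

```python
import re

# Hypothetical keyword lists standing in for a real emotion recognizer.
EMOTION_KEYWORDS = {
    "sad": {"sad", "lonely", "upset"},
    "happy": {"happy", "glad", "excited"},
    "angry": {"angry", "furious", "annoyed"},
}

# Pre-programmed replies, one per detected label.
SCRIPTED_RESPONSES = {
    "sad": "I'm sorry to hear that. Do you want to talk about it?",
    "happy": "That's great news!",
    "angry": "That sounds frustrating. How can I help?",
    "neutral": "I see. Tell me more.",
}


def detect_emotion(utterance):
    """Return the first emotion label whose keywords appear in the utterance."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    for label, keywords in EMOTION_KEYWORDS.items():
        if words & keywords:
            return label
    return "neutral"


def respond(utterance):
    """Look up the scripted reply for whatever emotion was detected."""
    return SCRIPTED_RESPONSES[detect_emotion(utterance)]


print(respond("I feel so lonely today."))
```

Swapping the keyword matcher for a more accurate recognizer would make the interaction more convincing, but the structure would stay the same: a label goes in and a scripted behavior comes out.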

Researchers in the field of affective computing are exploring ways to make machines more emotionally intelligent, but this is still a far cry from true emotional experience. The difficulty is that emotions are not just about external expressions; they are deeply tied to subjective experience. Philosophers use the term qualia for the personal, internal aspects of our sensory experiences, such as the way we perceive the color red or the feeling of pain. These subjective experiences are not something that can easily be replicated in machines.

Furthermore, emotions are not just reactive—they also play a role in higher-order cognitive processes such as decision-making, empathy, and moral reasoning. For a robot to truly think like a human, it would need to understand and process emotions in a nuanced and adaptive way.

The Philosophy of Machine Thought: Can a Robot Be Conscious?

The idea of robots having consciousness raises deep philosophical questions. Consciousness refers to the subjective experience of being aware of one’s thoughts, feelings, and surroundings. It is the “inner life” that humans experience when they reflect on their thoughts or feel emotions. The concept of consciousness has puzzled philosophers for centuries, and it remains one of the most debated topics in the philosophy of mind.

Some argue that consciousness is an emergent property of complex systems, meaning that if a machine becomes sufficiently complex, it could develop a form of consciousness. A closely related position, known as computationalism, holds that consciousness is the result of certain computational processes, which could, in theory, be replicated in machines. The philosopher John Searle used the label “strong AI” for this claim, a narrower sense of the term than the general AI described earlier.

Others, however, maintain that consciousness is inherently tied to biological processes and cannot be produced by computation alone. According to this view, consciousness is not simply about processing information; it depends on the specific biological and neural processes of the brain. This perspective is often referred to as biological naturalism, a position associated with Searle. Some theorists go further and propose that quantum processes in the brain play a role, although that is a separate and more controversial idea.

Regardless of which theory proves to be correct, there is no evidence that today’s machines are anywhere near achieving true consciousness. While robots may become increasingly sophisticated in simulating human-like behavior, they currently lack the self-awareness and subjective experience that we associate with conscious thought.

The Ethical and Social Implications

As robots become more advanced and human-like in their abilities, the ethical and social implications of AI and robotics become increasingly important. If robots were to reach a point where they could think like humans, what rights and responsibilities would we have toward them? Would they be entitled to the same protections as humans? Could they be held accountable for their actions?

The possibility of creating machines with human-like intelligence also raises questions about the future of work, privacy, and security. If robots were to surpass human intelligence, they could potentially replace human workers in many fields, leading to significant social and economic disruption. On the other hand, the integration of AI and robots into society could lead to new opportunities for collaboration and innovation.

Moreover, as AI systems become more autonomous, the question of control becomes paramount. Who would be responsible for the actions of an autonomous robot? Could an AI system make decisions that conflict with human values? These are questions that need to be addressed as we continue to push the boundaries of AI technology.

The Road Ahead: Will Robots Ever Think Like Humans?

So, can robots truly think like humans? At this point, the answer is no—at least not in the way we understand thinking. While robots and AI systems have made significant strides in simulating certain aspects of human cognition, they still fall short in many key areas. Machines can process information quickly, perform tasks with precision, and even simulate emotions, but they lack the full complexity, creativity, and subjective experience that define human thought.

That said, the future of AI and robotics is incredibly promising. As our understanding of the brain deepens and our technology advances, it is possible that we will one day create machines that are more human-like in their thinking. However, whether these machines will ever possess true consciousness, emotions, or self-awareness remains an open question.

For now, we continue to explore the possibilities, pushing the boundaries of what machines can do. But as we do so, we must also consider the ethical implications and the impact that these technologies will have on society. After all, the question of whether robots can truly think like humans is not just a technical one—it is a deeply philosophical and moral challenge that will shape our future.