In the unfolding age of robotics, autonomous machines are becoming an integral part of our lives. Whether it’s self-driving cars navigating city streets or AI-powered assistants enhancing productivity, robots are quickly moving out of the realm of science fiction and into everyday reality. However, such rapid advances raise pressing questions, not just about technology and efficiency, but about ethics. How do we ensure that these autonomous machines make decisions that are aligned with our moral values and societal norms? And more critically, how can we design systems that not only obey rules but also recognize and respect human dignity, privacy, and safety?

This article delves into the multifaceted issue of robot ethics, exploring the challenges, the current progress, and potential future directions. In doing so, we will examine key ethical principles, technological hurdles, and societal implications that need to be addressed as robots continue to evolve and integrate into the fabric of human life.

Understanding Robot Ethics: The Foundations

To understand how to ensure robot ethics, we first need to define what we mean by “ethics” in the context of robots and AI. Ethics is the study of the moral principles that govern a person’s behavior or the way an activity is conducted. For robots, this translates into a set of rules, guidelines, or codes that govern how they behave and interact with humans and the environment. Unlike traditional machines, autonomous robots can make decisions without human intervention. Because these decisions can directly affect human lives, ethical considerations must be deeply integrated into the robots’ design and operation.

The challenge arises because human ethics is not universally agreed upon. Different cultures, legal systems, and belief systems may interpret what is considered “right” or “wrong” differently. As a result, the ethical frameworks that govern autonomous machines must be flexible, adaptable, and capable of respecting diverse perspectives.

Key Ethical Principles for Autonomous Machines

Several ethical principles must guide the development and behavior of autonomous machines. These principles form the foundation of ethical decision-making in robots:

1. Beneficence and Non-maleficence: “Do Good, Do No Harm”

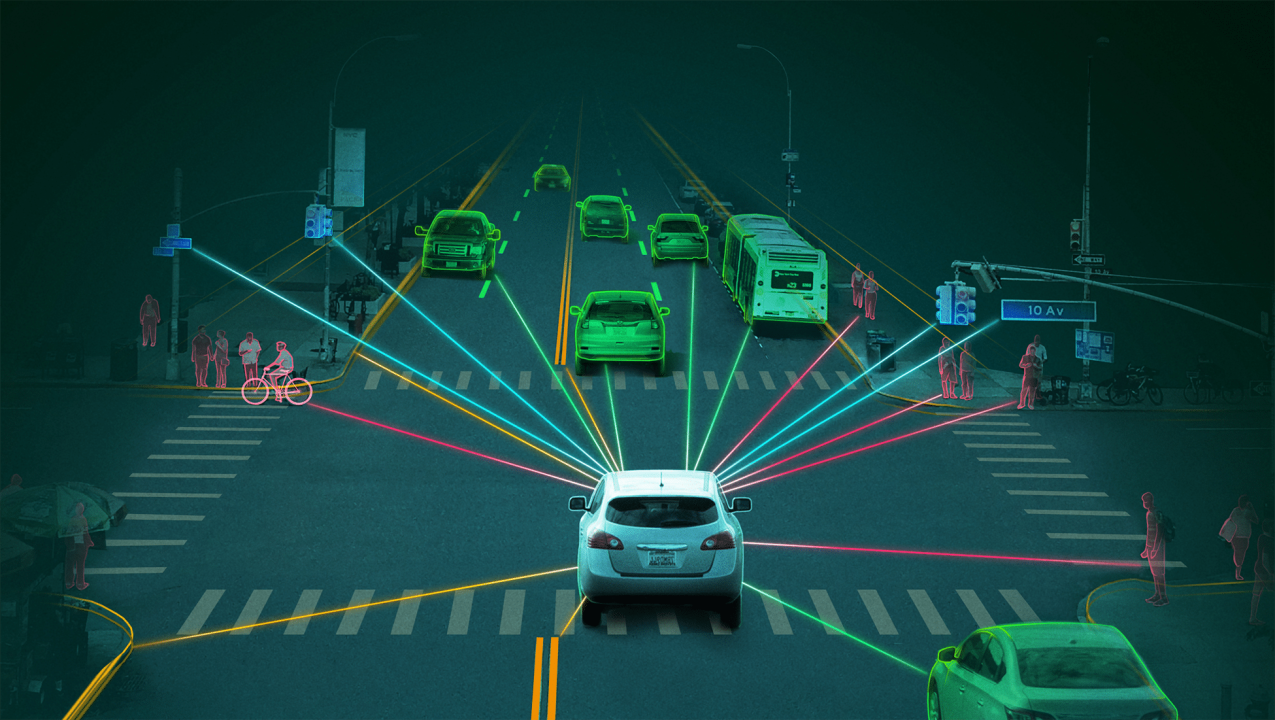

This principle asserts that robots should aim to benefit humans and society while avoiding harm. In the case of autonomous vehicles, for example, the “do no harm” aspect would mean that the vehicle should avoid causing accidents that could harm pedestrians, passengers, or other drivers.

The “trolley problem” often arises in these discussions: if an autonomous car must choose between hitting one person and swerving in a way that harms another, how should it decide? Should it minimize the number of casualties, or prioritize the safety of the car’s occupants? While there is no definitive answer, developing ethical guidelines that consider as many foreseeable outcomes and scenarios as possible is crucial to reducing harm while maximizing benefit.

2. Justice and Fairness: “Treat Everyone Equally”

A core ethical value is fairness. Autonomous machines should be programmed to act in ways that promote fairness and equity. This includes ensuring that algorithms do not reinforce existing biases or discrimination. For example, facial recognition technology used in robots or AI systems must be trained on diverse data sets to avoid racial or gender biases.

Ensuring fairness is particularly important in decision-making algorithms for autonomous systems, such as hiring bots, loan approval systems, and law enforcement tools. These systems must be transparent and auditable so that any harm they inadvertently cause to marginalized communities can be detected and corrected.

3. Autonomy and Respect for Human Dignity: “Let Humans Decide”

One of the fundamental principles of ethics is respect for individual autonomy. For autonomous robots, this translates into respecting human decisions and freedoms. Robots should not override human decisions unless absolutely necessary for the safety of the individual or others.

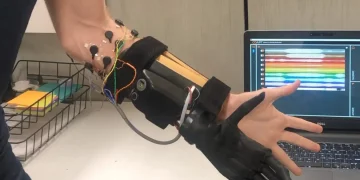

For instance, in healthcare settings, robots assisting with surgeries or rehabilitation must be designed to support human practitioners, not replace them entirely. The goal should be to empower humans, not undermine their role, thereby maintaining human dignity and ensuring that technology serves rather than replaces human judgment.

4. Transparency and Accountability: “Know How and Why Decisions Are Made”

Transparency is crucial for ethical robots. We need to ensure that autonomous machines are not black boxes, but rather systems whose decision-making processes are understandable and traceable. In situations where robots make decisions that affect human lives, such as in medical diagnostics or autonomous driving, the ability to explain how a machine arrived at a particular conclusion is essential for trust and accountability.

Accountability means that humans must be responsible for the actions of autonomous machines. If a robot makes an unethical decision, there must be a clear process in place to hold the responsible parties (whether developers, manufacturers, or operators) accountable for the consequences of the machine’s actions.
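To make the idea of traceable decisions more concrete, here is a minimal sketch of a decision log in Python. It is an illustration only: the class names, field names, the example vehicle, and the 5 m threshold are all hypothetical and are not taken from any real robotics framework or standard.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One traceable decision. All field names are hypothetical."""
    system_id: str
    inputs: dict
    chosen_action: str
    rationale: str
    responsible_party: str  # developer, manufacturer, or operator
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class DecisionLog:
    """Append-only, in-memory log; a real system would need durable storage."""

    def __init__(self):
        self._records = []

    def record(self, rec: DecisionRecord) -> None:
        self._records.append(rec)

    def explain(self, index: int) -> str:
        """Return a human-readable explanation of one logged decision."""
        rec = self._records[index]
        return (f"{rec.system_id} chose '{rec.chosen_action}' because "
                f"{rec.rationale} (accountable: {rec.responsible_party})")

    def export(self) -> str:
        """Serialize the whole log to JSON for an external audit."""
        return json.dumps([asdict(r) for r in self._records], indent=2)


log = DecisionLog()
log.record(DecisionRecord(
    system_id="vehicle-01",
    inputs={"obstacle_distance_m": 4.2, "speed_kmh": 30},
    chosen_action="emergency_brake",
    rationale="obstacle closer than the assumed 5 m braking threshold",
    responsible_party="operator",
))
print(log.explain(0))
```

Recording the inputs, the rationale, and the accountable party alongside each action is one simple way to support both explanation and later audit. It does not by itself make an opaque model interpretable.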

Technological Challenges in Ensuring Robot Ethics

Integrating ethics into autonomous machines involves numerous technological hurdles. While much progress has been made, significant challenges remain in ensuring that robots behave ethically.

1. Programming Ethical Decision-Making

One of the greatest challenges is how to program machines to make ethical decisions. Human ethics are subjective and often context-dependent, whereas machines follow explicit rules or patterns learned from data. Teaching robots to navigate complex ethical dilemmas requires an enormous amount of data and context, and even then, there is no guarantee that their decisions will align with human values.

For example, when an autonomous vehicle is confronted with a scenario where a crash is inevitable, how should it decide whom to harm or protect? Some AI researchers are developing ethical decision-making models that can prioritize different moral considerations in such situations, but these models still face significant criticism and need further refinement.
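One way to picture what “prioritizing different moral considerations” could mean in code is a strict priority ordering, where a lower-priority concern only matters when all higher-priority ones are tied. The Python sketch below is a toy illustration under that assumption. The `Outcome` fields, the ordering, and all numbers are invented for this example, and nothing here describes how any real vehicle decides.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """Predicted result of one maneuver. All fields are hypothetical."""
    maneuver: str
    expected_injuries: float       # estimated number of people injured
    traffic_rule_violations: int   # rules broken by the maneuver
    property_damage: float         # estimated cost, arbitrary units


def priority_key(outcome: Outcome) -> tuple:
    # Assumed ordering: injuries first, then rule violations, then damage.
    # Python compares tuples element by element, which gives a strict
    # (lexicographic) priority between the three considerations.
    return (outcome.expected_injuries,
            outcome.traffic_rule_violations,
            outcome.property_damage)


def pick_maneuver(outcomes: list) -> Outcome:
    """Return the outcome that ranks best under the assumed ordering."""
    return min(outcomes, key=priority_key)


options = [
    Outcome("brake_in_lane", 0.2, 0, 5000.0),
    Outcome("swerve_left", 0.2, 1, 1000.0),
    Outcome("swerve_right", 0.6, 0, 0.0),
]
best = pick_maneuver(options)
print(best.maneuver)
```

With these made-up numbers the sketch picks `brake_in_lane`: it ties with `swerve_left` on expected injuries but breaks no traffic rules. A different ordering, or a weighted sum in place of a strict ordering, would encode a different moral stance, which is exactly where much of the criticism of such models is aimed.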

2. Bias in Machine Learning Models

Another key challenge is the potential for bias in the algorithms that govern robot behavior. Most machine learning models used in autonomous systems are trained on large data sets. However, if the data sets themselves are biased (e.g., underrepresenting certain populations), the machine can learn and perpetuate those biases in its decisions. This is especially problematic in areas such as facial recognition, criminal justice, and hiring, where biased decisions can have serious real-world consequences.

Researchers are working to develop better techniques for detecting and mitigating bias in machine learning algorithms. These efforts include more diverse training data, fairness constraints in algorithmic models, and auditing systems that can identify and correct biased outcomes.
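As a rough illustration of what auditing for biased outcomes can look like, the Python sketch below compares approval rates across groups and computes the ratio of the lowest rate to the highest. The data is made up, the function names are hypothetical, and the 0.8 threshold (the commonly cited “four-fifths” rule of thumb) is an assumption chosen for this example, not a universal standard.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Map each group to its approval rate.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group had any approvals, so the rates are equal
    return min(rates.values()) / highest


# Made-up audit data: group A is approved 8 times out of 10, group B 4 out of 10.
decisions = ([("A", True)] * 8 + [("A", False)] * 2 +
             [("B", True)] * 4 + [("B", False)] * 6)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
THRESHOLD = 0.8  # assumed cut-off for flagging a disparity
flagged = ratio < THRESHOLD
print(rates, ratio, flagged)
```

A check like this only detects a disparity in outcomes. Deciding whether the disparity is unjustified, and how to correct it, still requires human judgment and domain knowledge.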

3. Adapting to Unforeseen Situations

Autonomous robots must be able to handle unexpected or unprecedented situations. This is especially true for robots that interact with the real world, where scenarios can emerge that were never considered during development. This makes it difficult to predict how a robot will behave in all circumstances, let alone ensure that its actions will always align with ethical principles.

For example, a robot designed for disaster response must be able to make decisions about resource allocation, life-saving actions, and prioritization of human needs in ways that might not be fully predictable in advance. AI systems need to be designed with a level of adaptability that allows them to learn from experience while remaining within ethical boundaries.
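One common way to think about “learning while remaining within ethical boundaries” is to separate the two concerns: a learned component proposes actions, and a fixed, hand-written check filters out any proposal that crosses a hard limit. The Python sketch below illustrates that pattern. The `Action` fields, the 0.05 risk limit, and the fallback action are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A candidate action. All fields are hypothetical."""
    name: str
    expected_benefit: float  # score from a learned policy
    risk_to_humans: float    # estimated probability of harm, 0.0 to 1.0


MAX_RISK = 0.05  # assumed hard limit that learning is never allowed to change
FALLBACK = Action("stop_and_request_human_help", 0.0, 0.0)


def choose_action(candidates, max_risk=MAX_RISK):
    """Pick the most beneficial candidate that stays within the risk limit."""
    allowed = [a for a in candidates if a.risk_to_humans <= max_risk]
    if not allowed:
        # Nothing is acceptable, so defer to a human and take no risky action.
        return FALLBACK
    return max(allowed, key=lambda a: a.expected_benefit)


proposals = [
    Action("clear_debris_fast", expected_benefit=0.9, risk_to_humans=0.30),
    Action("clear_debris_slow", expected_benefit=0.6, risk_to_humans=0.02),
    Action("wait", expected_benefit=0.1, risk_to_humans=0.0),
]
print(choose_action(proposals).name)
print(choose_action([proposals[0]]).name)
```

Here the highest-scoring proposal is rejected because its estimated risk exceeds the limit, and the robot falls back to asking for human help when no proposal is acceptable. The quality of such a filter depends entirely on how well risk can be estimated in situations nobody anticipated, which is the hard part.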

Legal and Social Implications of Robot Ethics

The ethical development of autonomous machines is not just a technical issue; it is also a deeply social and legal challenge. As robots become more autonomous, society will need to address important questions regarding liability, rights, and regulation.

1. Legal Liability

When a robot makes a mistake that causes harm, who is responsible? Is it the manufacturer, the developer, the operator, or the robot itself? The legal system must evolve to account for these new complexities. One possibility is that developers and manufacturers will be required to ensure their robots meet certain safety and ethical standards. However, as robots become more independent, the lines of responsibility become increasingly blurred.

2. Regulation and Standards

In the absence of universally accepted ethical guidelines for autonomous machines, regulatory bodies around the world must work to create frameworks that ensure robots are designed and deployed in ways that benefit society while minimizing harm. Standards for safety, fairness, transparency, and accountability will need to be codified to govern the development of robots in various sectors, including healthcare, transportation, and law enforcement.

3. Impact on Employment and Social Structures

Robots and AI systems are poised to revolutionize industries, but they also bring the potential for massive job displacement. This creates ethical questions about how society will adapt to the changes brought on by automation. Should we provide universal basic income to those displaced by robots? How can we ensure that the benefits of robotics are distributed equitably across society? These are questions that will require thoughtful ethical consideration and action.

The Road Ahead: Moving Toward Ethical Robots

As we continue to develop more advanced autonomous machines, ensuring that these robots behave ethically will require ongoing collaboration between engineers, ethicists, policymakers, and society at large. The principles outlined above must serve as the foundation for a new era of responsible robot design, one that respects human dignity, promotes fairness, and minimizes harm.

The integration of ethical considerations into robotics isn’t just a matter of coding a set of rules into machines. It involves addressing complex questions about human values, societal priorities, and the very nature of decision-making in an autonomous world. As robots become more capable, it is essential that their behavior aligns with the best of human ethics, and that we, as a society, take responsibility for guiding their development.